Goals

Ever since the beginning of the Knights Province project I have wanted it to be visually pleasing: a slightly cartoonish style with bright colors and semi-realistic soft lighting and shading. Ambient Occlusion (AO) is one of those shading effects that add depth and a sense of scale to the whole scene, tying objects together and highlighting details.

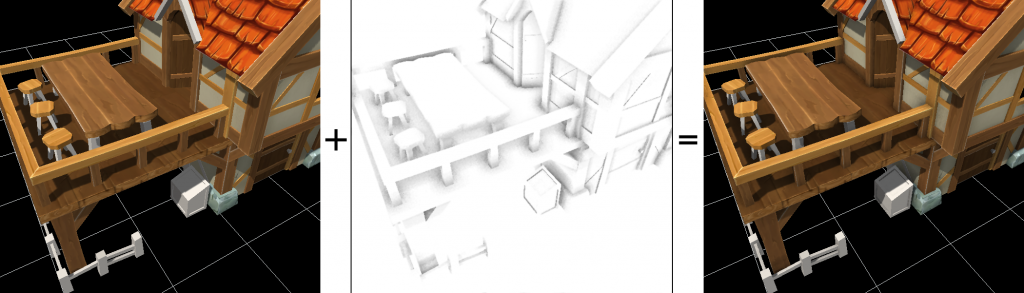

SSAO composition

What is AO

So what exactly is AO, and how does it relate to the real world? AO is an effect of ambient light spreading between objects (hence the effect’s name) and also of the dirt that naturally accumulates in crevices. Contrary to popular belief, AO is not 100% grounded in the real world. Take a look at a corner where the walls meet the ceiling: it has no dark gradient on it (even worse, some corners seem to gain brightness near the edge due to light reflecting from the other side). Still, AO is a nice artistic effect that adds depth to the picture and gives objects more “weight”, making details stand out more.

How AO is done

AO can be done in several different ways depending on the use case. It can be raytraced and recalculated every frame when quality is king, which is typical for offline rendering in 3ds Max, Blender and other rendering applications. This offers superior quality at the cost of speed: a typical scene can take anywhere from 30+ seconds to minutes to calculate. Games cannot afford this.

For games, AO can be raytraced once and baked into lighting textures (so-called lightmaps). This is much faster at runtime. The downside is that only static objects can be baked: dynamic objects won’t have proper AO on them or on the objects they interact with. Older games often combined lightmaps with fake shadow patches below actors’ feet, wheels, etc. That looked okay in the 2000s, when computational resources were limited.

Now that GPUs have become sufficiently powerful, dynamic solutions are feasible for games: SSAO, HBAO, HBAO+ and other variants. They take some shortcuts and don’t come that close to offline rendering solutions, but they still offer a good enough compromise between performance and looks.

Options

Here’s a short list of options I considered viable for Knights Province:

- Pre-baked lightmaps: look best on static objects, but don’t cope well with dynamic ones and need lots of special-case “hacks” that convolute the render pipeline. They also require either a separate UV map or unique UV mapping (which does not mix well with houses reusing textures and materials, cloned trees and terrain).

- Dynamically baked lightmaps or vector maps: offer a more general approach to the problem, but still require a lot of hassle and have noticeable flaws.

- SSAO and its variants: an even more general solution. It works on top of any geometry and does not care how that geometry was put together. What you see is what gets AOed. It’s that simple.

Why did I pick SSAO

I picked SSAO for its generality, and the simple SSAO implementation specifically for its widespread popularity, which suggests that it is a good enough compromise between ease of implementation, performance and picture quality. There are many tutorials, and the common problems and their solutions are well known. Optimization techniques are plentiful as well.

How SSAO works in general

SSAO works by checking each pixel’s surroundings within a small sphere to see whether they block ambient light from reaching the surface. The more of those surroundings are covered by other objects, the more occluded the pixel is and the darker it will be. Ideally, each surface pixel would check a whole multitude of directions and ranges to see how occluded it is from ambient space. In practice, this is a very costly operation, so only a handful of tests are made and their coarse results get averaged out.
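The idea can be sketched on the CPU in a few lines. This is only a simplified illustration, not the game’s shader: it samples fixed 2D offsets in a depth buffer instead of 3D points in a sphere, and the names `ambient_light_factor`, `OFFSETS`, `bias` and `max_range` are all made up for the example.

```python
def ambient_light_factor(depth, x, y, offsets, bias=0.05, max_range=1.0):
    """Return how much ambient light reaches pixel (x, y): 1.0 = fully lit."""
    height, width = len(depth), len(depth[0])
    center = depth[y][x]
    occluded = 0
    for dx, dy in offsets:
        # Clamp to the buffer edge so border pixels still take every sample.
        sx = min(max(x + dx, 0), width - 1)
        sy = min(max(y + dy, 0), height - 1)
        # Positive value: the neighbour is closer to the camera than we are.
        closer_by = center - depth[sy][sx]
        # Count it as an occluder only if it is noticeably closer (bias)
        # but not so far in front that it is unrelated (max_range).
        if bias < closer_by <= max_range:
            occluded += 1
    # Average the coarse yes/no tests into a single light factor.
    return 1.0 - occluded / len(offsets)


# Hypothetical sample pattern: a handful of screen-space offsets.
OFFSETS = [(-2, 0), (2, 0), (0, -2), (0, 2), (-1, -1), (1, -1), (-1, 1), (1, 1)]

# Toy depth buffer: a raised block (depth 0.6) next to a floor (depth 1.0).
DEPTH = [[0.6, 0.6, 1.0, 1.0, 1.0] for _ in range(5)]

for x in (0, 2, 4):
    print(x, ambient_light_factor(DEPTH, x, 2, OFFSETS))
```

In this toy scene only the floor pixel right next to the block (x = 2) is darkened; the top of the block and the far floor stay fully lit.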

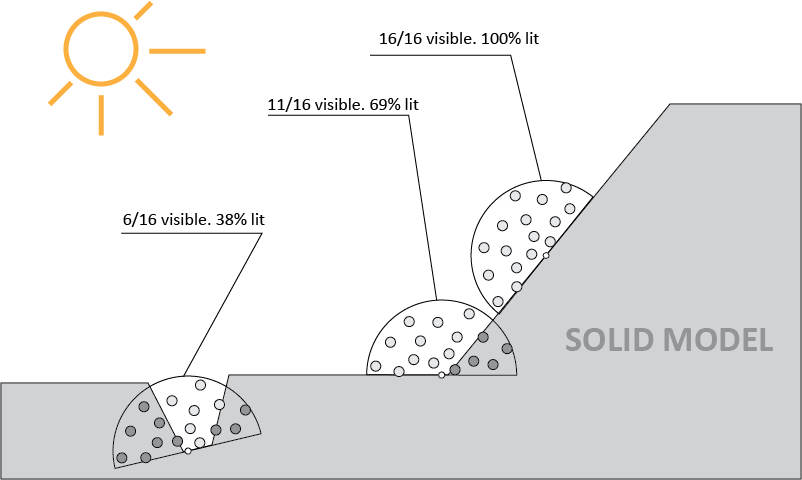

The illustration shows a 2D version of SSAO in which 3 surface points are checked with hemispheres oriented along the surface normal (a reasonable optimization).

SSAO needs DR

In older times, when rendering was simpler, GPUs rendered frames in one go and calculated each pixel’s color by factoring in everything at once. SSAO, however, samples a lot of pixels over and over (even for coarse results, every pixel needs to test 16+ surrounding pixels to figure out its shading). Because of that, it makes sense to prerender the required information and access it from a buffer. Providing for that requires a complex rework of the rendering pipeline. The approach of rendering into intermediate buffers is called Deferred Rendering (DR). Whereas before the game drew objects one by one in one go, now all objects need to be drawn into temporary buffers (typically position, surface normal and color) that are later combined into the final picture. DR is already in the game to some extent: shadowmaps, water reflections and fog of war are prepared in separate buffers and combined into the final rendering.
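A rough sketch of the two-pass idea, under simplifying assumptions: fragments are already projected to pixel coordinates, the buffers are plain nested lists, and the final pass uses simple Lambert shading as a stand-in for whatever the real combine step does. `Fragment`, `geometry_pass` and `combine_pass` are hypothetical names.

```python
import math
from dataclasses import dataclass


@dataclass
class Fragment:
    x: int
    y: int
    depth: float
    normal: tuple  # unit surface normal
    color: tuple   # RGB in 0..1


def geometry_pass(fragments, width, height):
    """Pass 1: draw every object into the intermediate buffers."""
    depth = [[math.inf] * width for _ in range(height)]
    normal = [[(0.0, 0.0, 0.0)] * width for _ in range(height)]
    color = [[(0.0, 0.0, 0.0)] * width for _ in range(height)]
    for f in fragments:
        if f.depth < depth[f.y][f.x]:  # depth test: keep the nearest surface
            depth[f.y][f.x] = f.depth
            normal[f.y][f.x] = f.normal
            color[f.y][f.x] = f.color
    return depth, normal, color


def combine_pass(depth, normal, color, light_dir):
    """Pass 2: build the final picture from the buffers alone."""
    out = []
    for y, row in enumerate(depth):
        out_row = []
        for x, d in enumerate(row):
            if d == math.inf:  # nothing was drawn here
                out_row.append((0.0, 0.0, 0.0))
                continue
            n = normal[y][x]
            lambert = max(0.0, sum(a * b for a, b in zip(n, light_dir)))
            out_row.append(tuple(c * lambert for c in color[y][x]))
        out.append(out_row)
    return out


fragments = [
    Fragment(0, 0, 5.0, (0.0, 0.0, 1.0), (1.0, 0.0, 0.0)),  # far, red
    Fragment(0, 0, 2.0, (0.0, 0.0, 1.0), (0.0, 1.0, 0.0)),  # near, green
]
buffers = geometry_pass(fragments, width=2, height=1)
image = combine_pass(*buffers, light_dir=(0.0, 0.0, 1.0))
print(image)
```

The point of the split is that an effect like SSAO can run between the two passes and read the depth and normal buffers as often as it needs without redrawing any geometry.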

DR plan

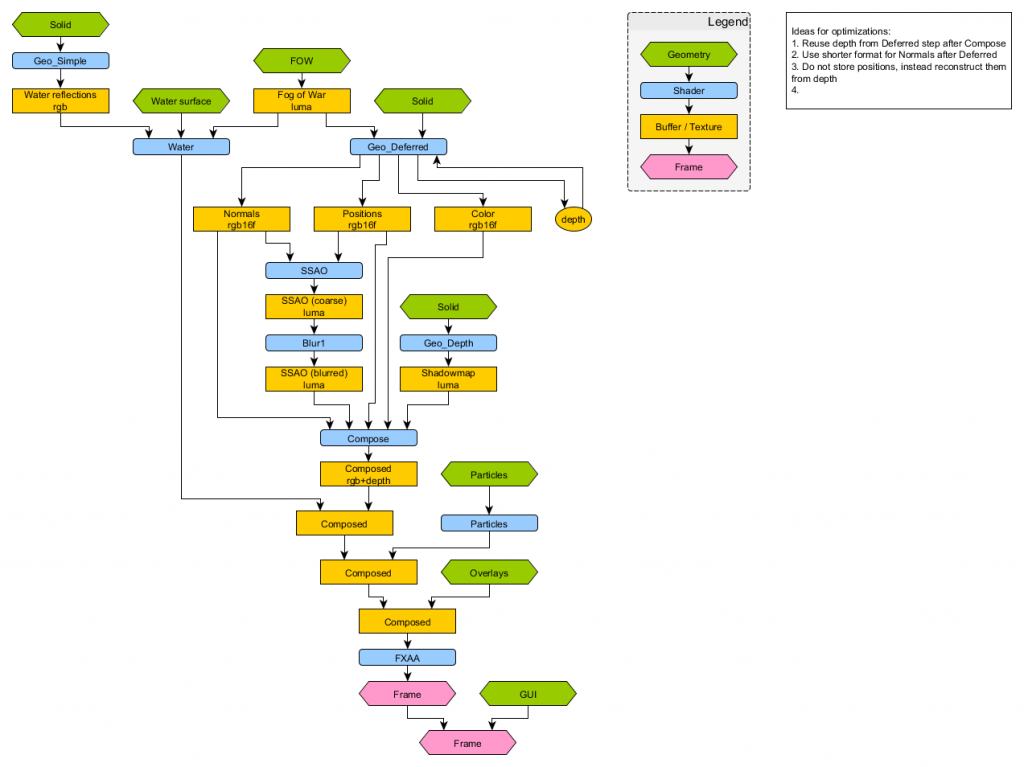

The render pipeline needed some planning in order to find the correct place and sequence for each operation. Here’s how the render pipeline is planned to look with DR and SSAO:

Current render structure of the game

SSAO optimizations

SSAO is a very resource-hungry algorithm: it needs to test dozens of pixels for every drawn pixel, so optimizations are vital. The most straightforward one is to sample only those points that are above the tested surface (testing within a hemisphere instead of a whole sphere of directions). Next comes randomizing the samples, which is done by rotating the sampling hemisphere for each successive pixel. The output is then more evenly distributed, but noisy, and blurring helps to reduce the noise. SSAO has a number of controls that allow trading quality for performance, e.g. reducing the number of test samples or the blur radius. At the moment I plan to add 3 SSAO settings: off, low and high quality.
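The three optimizations can be sketched roughly as follows. Everything here is an assumption made for illustration: the preset values, the 4x4 tiled rotation pattern and the helper names `hemisphere_kernel`, `rotate_about_normal`, `pixel_angle` and `box_blur` are not taken from the game.

```python
import math
import random

# Hypothetical presets; sample counts and blur radii are illustrative only.
QUALITY_PRESETS = {
    "off": None,
    "low": {"samples": 8, "blur_radius": 1},
    "high": {"samples": 16, "blur_radius": 2},
}


def hemisphere_kernel(count, seed=0):
    """Random points inside the unit hemisphere around a +Z surface normal."""
    rng = random.Random(seed)
    kernel = []
    while len(kernel) < count:
        p = (rng.uniform(-1, 1), rng.uniform(-1, 1), rng.uniform(0, 1))
        # Rejection sampling: keep only points inside the unit sphere.
        if 0.0 < math.sqrt(sum(c * c for c in p)) <= 1.0:
            kernel.append(p)
    return kernel


def rotate_about_normal(sample, angle):
    """Rotate a kernel sample around the normal (the Z axis)."""
    x, y, z = sample
    c, s = math.cos(angle), math.sin(angle)
    return (x * c - y * s, x * s + y * c, z)


def pixel_angle(x, y):
    """Assumed rotation pattern: 16 angles repeating in 4x4 pixel tiles."""
    return ((x % 4) + 4 * (y % 4)) * (2 * math.pi / 16)


def box_blur(image, radius):
    """Average each pixel with its in-bounds neighbours to hide the noise."""
    height, width = len(image), len(image[0])
    out = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            total, n = 0.0, 0
            for sy in range(max(0, y - radius), min(height, y + radius + 1)):
                for sx in range(max(0, x - radius), min(width, x + radius + 1)):
                    total += image[sy][sx]
                    n += 1
            out[y][x] = total / n
    return out


preset = QUALITY_PRESETS["low"]
kernel = hemisphere_kernel(preset["samples"], seed=1)
rotated = [rotate_about_normal(s, pixel_angle(3, 2)) for s in kernel]
noisy_ao = [[0.0, 1.0], [1.0, 0.0]]
smooth_ao = box_blur(noisy_ao, preset["blur_radius"])
print(smooth_ao)
```

Rotating one small kernel per pixel gives neighbouring pixels different sample directions without generating a new kernel for each of them, which is why the result is noisy and relies on the blur pass.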

DR and AA

Unfortunately, DR does not mix well with traditional AA techniques. Since DR relies on pixel-perfect information in its buffers, only straightforward AA could work (that is, rendering the frame X times larger and downscaling it), which is unfortunate for SSAO, where each additional pixel needs a whole lot of sampling from its neighbouring area. Luckily, there are known AA algorithms well suited for DR. FXAA is quite popular and easy to implement.
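To put a number on why the straightforward approach hurts, here is a back-of-the-envelope sketch. It assumes one depth read per SSAO sample and uses an example resolution of 1920x1080; `ssao_depth_reads` and `downscale` are illustrative helpers, and this shows supersampling, not FXAA.

```python
def ssao_depth_reads(width, height, samples_per_pixel, supersample=1):
    """Depth-buffer reads per frame when rendering `supersample` times larger."""
    return (width * supersample) * (height * supersample) * samples_per_pixel


def downscale(image, factor):
    """Shrink a grayscale image by averaging factor x factor pixel blocks."""
    height, width = len(image) // factor, len(image[0]) // factor
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            block = [image[y * factor + j][x * factor + i]
                     for j in range(factor) for i in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


native = ssao_depth_reads(1920, 1080, 16)
doubled = ssao_depth_reads(1920, 1080, 16, supersample=2)
print(native, doubled, doubled // native)

big_frame = [[0.0, 1.0, 1.0, 1.0],
             [1.0, 0.0, 1.0, 1.0]]
print(downscale(big_frame, 2))
```

With 16 samples per pixel, doubling the frame in each dimension quadruples the SSAO work (from about 33 million to about 133 million reads per frame in this example) before the downscale even runs.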

DR and transparency

DR has certain difficulty coping with semi-transparent surfaces (STS). Since the depth buffer can only contain a single value per pixel, that value belongs either to the surface behind the STS or to the STS itself, which means that one of the two gets shaded wrongly. There are smart techniques to deal with that (e.g. using a checkerboard pattern and storing every 2nd pixel from the other surface), but for an RTS that has quite few STSs (namely smoke particles and the water surface) it is easier to deal with the issue by simply rendering STSs on top of the DR/AO geometry. STSs generally don’t need AA either, since their transparency means they don’t create a lot of sharp pixels in the first place. Even better, STSs rendered on top of normal geometry cover some of it, thus reducing the amount of sharp edges that AA will need to smooth out.

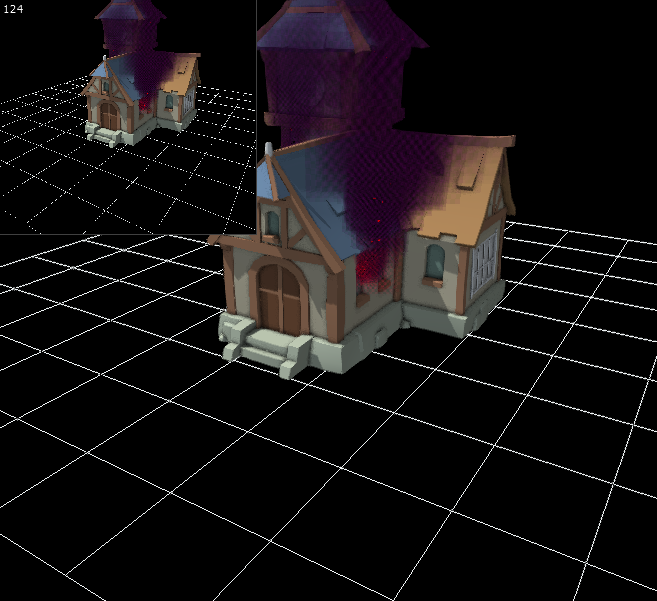

Recombining the render

Once AO is computed and applied, the render needs to return to “normal” functioning to render STSs. This is done by writing RGB and depth values back into a buffer and rendering STSs using the default depth test.
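A minimal sketch of that last step, assuming a “less than” depth test, standard alpha blending, back-to-front sorting and no depth writes for the STSs. These are common choices rather than confirmed details of the game’s renderer, and `composite_transparent` is a hypothetical name.

```python
def composite_transparent(color, depth, fragments):
    """Blend semi-transparent fragments over an already shaded opaque frame.

    color, depth: buffers written back after the DR/AO passes.
    fragments: (x, y, depth, rgb, alpha) tuples for smoke, water, etc.
    """
    # Draw far-to-near so that overlapping STSs blend in the right order.
    for x, y, frag_depth, rgb, alpha in sorted(fragments, key=lambda f: -f[2]):
        # Default depth test: skip fragments hidden behind opaque geometry.
        if frag_depth < depth[y][x]:
            dst = color[y][x]
            color[y][x] = tuple(s * alpha + d * (1.0 - alpha)
                                for s, d in zip(rgb, dst))
            # Depth is left untouched, so STSs never hide each other.
    return color


# Opaque result: two red pixels at depth 5.
color = [[(1.0, 0.0, 0.0), (1.0, 0.0, 0.0)]]
depth = [[5.0, 5.0]]

smoke = [
    (0, 0, 3.0, (1.0, 1.0, 1.0), 0.5),  # in front of the wall: blended
    (1, 0, 7.0, (1.0, 1.0, 1.0), 0.5),  # behind the wall: rejected
]
result = composite_transparent(color, depth, smoke)
print(result)
```

Because the opaque depth values are already in the buffer, smoke behind a house is correctly hidden while smoke in front of it simply tints the finished, AO-shaded pixel.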

(Particle textures are replaced with checkerboards for debug)

In the following articles (which are already in the works) I’ll cover FXAA and general render perspectives.

P.S. Thanks to Thibmo for reviewing this article 🙂

I’m always amazed by this project and its progress.

is part 2 coming this weekend?

Good idea, but I was very busy this week, so it’s more likely to come later.

Thanks for asking! )

Looks pretty good so far 🙂

Keep up the good work. I was wondering whether there is any possibility that you put Knights Province on Kickstarter too? Maybe it would open new ways to get help from other artists. Trust me, I saw many bad projects on there which were funded and finished, so I guess Knights Province is a very good project and the world of gamers needs to know about it.

Greetings from Germany

Thank you. The game is not ready for Kickstarter yet, as it is still in Alpha stage. I’ll think about it when it becomes at least Beta.

i guess the 2nd part is coming 13/08.

I’ve got another one ready for 13/08 😉